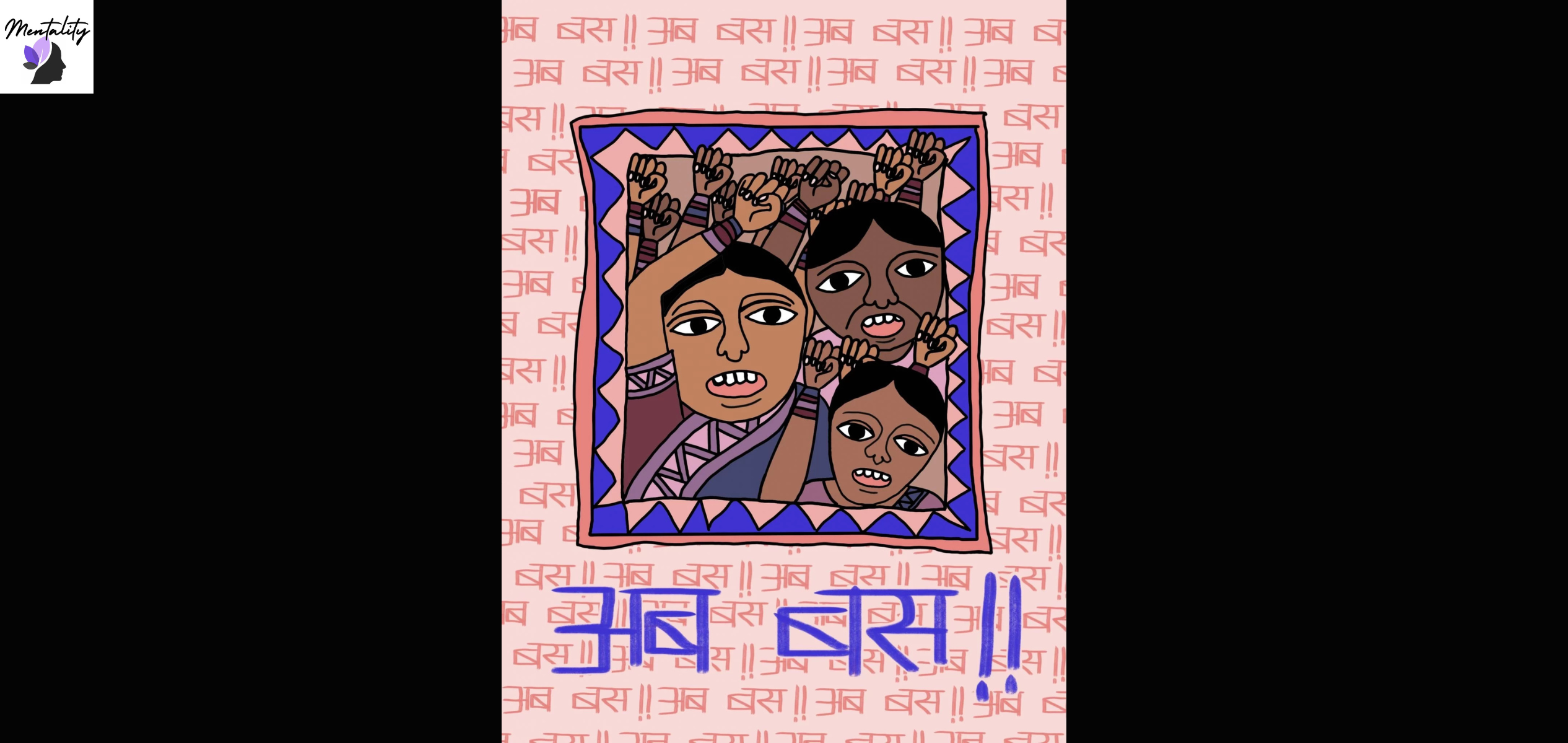

‘Ab Bas’ (Now, Enough!) (Image Credit: Sunidhi Kothari/Feminism In India)

By Vibha Singh

When victims see their perpetrators moving freely, even years after the incident, they often experience panic attacks, intrusive memories, breathlessness, and trembling.

In March 2024, 21-year-old Sandhya was to graduate in two months. Just a few more assignments and she would hold an arts degree from a college in South Mumbai. Stress and pressure took over, just as they do for millions of students every year around the end of the academic year.

As she clicked a link while doing an internal assignment for her Artificial Intelligence paper, she froze. She asked us to keep her identity hidden.

Nude photos of her popped up on the screen; she said she felt nailed to her chair. “The initial shock gave way to waves of anger, fear, and shame,” she said.

A few months later, in October 2024, Manisha Kamble, another student in a Mumbai college, befriended a man online. The friendship turned into a romance. In June 2025, she found out that he was already married. As soon as she called off the relationship, her nude images were found on her college WhatsApp groups, and several classmates received lewd messages from her social media account.

Sandhya was alone at home when she saw her own photos online, which was partly anxiety-inducing and partly a relief, she said. “I was really scared about how my family would react,” she added.

Kamble initially decided to hide it from her family. When she eventually told her parents, they forbade her from going out. “I was not able to sleep for days,” she said.

In mid-July, Kamble saw her images on social media alongside claims that she was sexually available. Some of the images were uploaded to pornographic websites and chat groups, she said. She was doxxed and began receiving sexually explicit calls and messages from strangers every day. “It felt like my life had been taken over by them,” she said. “My phone would ring continuously: sometimes thirty to fifty unknown calls a day.” Kamble decided to ignore all calls altogether.

“These acts go beyond privacy violations,” said Bhushan M Shinde, assistant professor and Internal Quality Assurance Cell coordinator, Vivekanand Education Society College of Law, Mumbai. “They are a form of digital sexual assault that often lead to lifelong trauma.”

Such abuse keeps the stress system stuck on high alert, leading to anxiety, panic, sleeplessness, shame, and a constant fear that the content might resurface at any time, he added.

Leaked photos and image morphing

In November 2025, actress Girija Oak-Godbole took to social media to politely ask for AI-generated, digitally morphed videos of her to be taken down. “I am fully aware that I cannot do anything about it,” she said, other than to request that people not watch such videos. “You are part of the problem.”

She said her 12-year-old son will eventually have access to social media and see those images as they will remain on the internet forever. “This game has no rules,” she said.

What happened to Oak-Godbole falls under the larger umbrella of image-based abuse, which is the creation or sharing of intimate or identity-defining images without consent; this includes AI face-swaps and “nudes,” deepfakes, edited screenshots, and impersonation accounts.

In November 2023, actress Rashmika Mandanna’s face was morphed in an Instagram video, which went viral. “If this happened to me when I was in school or college, I genuinely can’t imagine how could I ever tackle this,” she posted on X.

After the fake video of Mandanna went viral, the Indian government issued regulations requiring social media platforms to take down non-consensual intimate imagery, specifically including non-consensual deepfakes, within two hours of its being flagged.

Ninety-two per cent of women reporting deepfake abuse are ordinary women, not celebrities, according to a 2025 report based on cases submitted to Meri Trustline, a helpline by the Rati Foundation.

According to a 2025 study by Equality Now and Breakthrough, women rarely go to the police about non-consensual image circulation because of stigma. Survivors often prioritise removing the content from online platforms over pursuing criminal complaints, the study says, a pattern that exposes serious gaps in India’s legal and cybercrime response mechanisms.

Changing the narrative

Shifting the blame away from the victim goes a long way, said Sadia Saeed, founder and chief psychologist at Inner Space, a leading therapy platform. “It is never the victim’s fault. It’s just a picture, a body—why feel ashamed of something you didn’t do?”

Kamble’s college counsellor asked her to report the incident to the respective platforms and deactivate her social media accounts to avoid further harassment. The counsellor put her in touch with police officials who go to colleges to conduct awareness sessions. All of this changed the narrative for her. “I felt why should I disappear from the internet when he is the one committing the crime?”

Sandhya is learning to deal with it, with the help of family and friends. “You must speak up, seek help, and never blame yourself,” she said.

“I am now planning to study cyber law so that I can help many like me who suffer in silence,” said Kamble.

Vibha Singh is a Mumbai-based journalist and a media educator. She is the founder of Women Against Cyber Abuse and India Solutions Desk.